FlowMDM: Seamless Human Motion Composition with Blended Positional Encodings

CVPR 2024

German Barquero, Sergio Escalera, and Cristina Palmero

University of Barcelona and Computer Vision Center, Spain

🔥 Demo

📌 Abstract

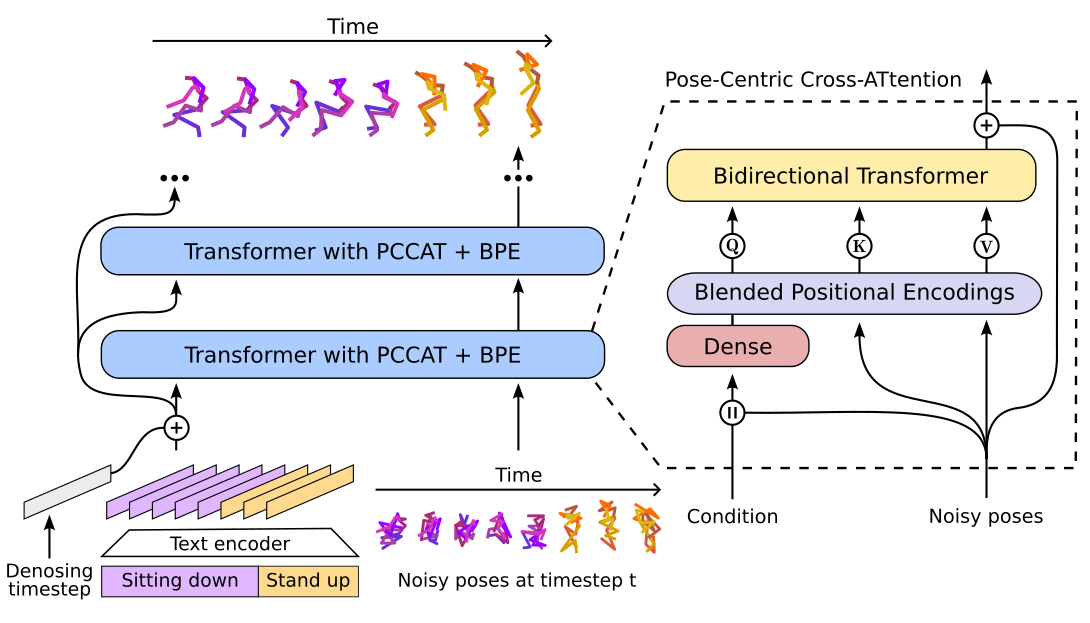

Conditional human motion generation is an important topic with many applications in virtual reality, gaming, and robotics. While prior works have focused on generating motion guided by text, music, or scenes, these typically result in isolated motions confined to short durations. Instead, we address the generation of long, continuous sequences guided by a series of varying textual descriptions. In this context, we introduce FlowMDM, the first diffusion-based model that generates seamless Human Motion Compositions (HMC) without any postprocessing or redundant denoising steps. For this, we introduce the Blended Positional Encodings, a technique that leverages both absolute and relative positional encodings in the denoising chain. More specifically, global motion coherence is recovered at the absolute stage, whereas smooth and realistic transitions are built at the relative stage. As a result, we achieve state-of-the-art results in terms of accuracy, realism, and smoothness on the Babel and HumanML3D datasets. FlowMDM excels when trained with only a single description per motion sequence thanks to its Pose-Centric Cross-ATtention, which makes it robust against varying text descriptions at inference time.

Finally, to address the limitations of existing HMC metrics, we propose two new metrics: the Peak Jerk and the Area Under the Jerk, to detect abrupt transitions.
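As a rough illustration of what jerk-based transition metrics measure, the sketch below computes jerk with third-order finite differences and derives two summary values from it. The function names, the `fps` parameter, and the `reference` level used for the area are assumptions made for this example; the exact definitions of the Peak Jerk and the Area Under the Jerk are those given in the paper.

```python
import numpy as np


def jerk_magnitude(positions: np.ndarray, fps: float = 30.0) -> np.ndarray:
    """Per-frame, per-joint jerk magnitude from third-order finite differences.

    positions: joint positions with shape (frames, joints, 3).
    Returns an array with shape (frames - 3, joints).
    """
    # Jerk is the third time derivative of position.
    jerk = np.diff(positions, n=3, axis=0) * fps**3
    return np.linalg.norm(jerk, axis=-1)


def peak_jerk(jerk: np.ndarray) -> float:
    """Largest jerk magnitude over all frames and joints of a window."""
    return float(jerk.max())


def area_under_jerk(jerk: np.ndarray, reference: float) -> float:
    """Summed absolute deviation of the per-frame maximum jerk from `reference`.

    `reference` is a hypothetical baseline (for instance a dataset-level
    average jerk); it is an assumption of this sketch.
    """
    per_frame = jerk.max(axis=1)
    return float(np.abs(per_frame - reference).sum())
```

In this sketch, a window around a transition with one sudden spike would yield a high peak value, while a window that stays jerky for many frames would mainly raise the area value.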

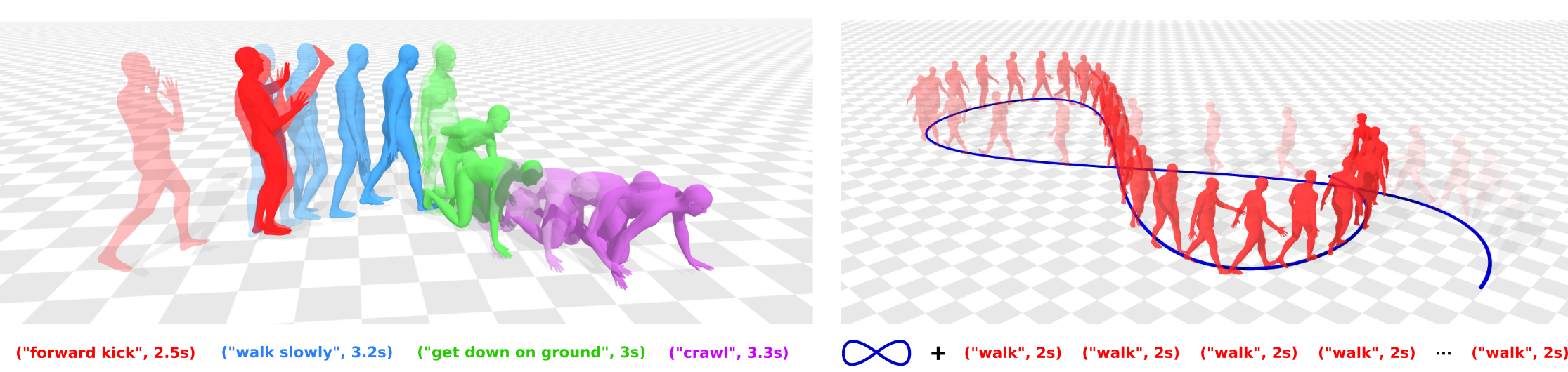

🎬 Human Motion Composition (🏃🏻♀️➜🚶🏻♀️➜🧎🏻♀️➜🧘🏻♀️)

Solid curves match the trajectories of the global position (blue) and left/right hands (purple/green). Darker colors indicate instantaneous jerk deviations from the median value, saturating at twice the jerk's standard deviation in the dataset (black segments). Abrupt transitions manifest as black segments amidst lighter ones.
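The color coding described above can be read as a simple normalization. The following sketch is one possible interpretation, assuming the deviation is taken as an absolute value and mapped linearly to a darkness level in [0, 1]; the function and argument names are hypothetical.

```python
import numpy as np


def jerk_darkness(jerk, dataset_median: float, dataset_std: float) -> np.ndarray:
    """Map instantaneous jerk to a darkness level in [0, 1].

    0 means the jerk equals the dataset median (lightest color) and
    1 means the deviation reached twice the dataset standard deviation
    or more (black segment). Absolute deviation is an assumption.
    """
    deviation = np.abs(np.asarray(jerk, dtype=float) - dataset_median)
    return np.clip(deviation / (2.0 * dataset_std), 0.0, 1.0)
```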

🎬 Human Motion Extrapolation (🚶➜🚶➜🚶➜🚶)

🌟 Key contributions 🌟

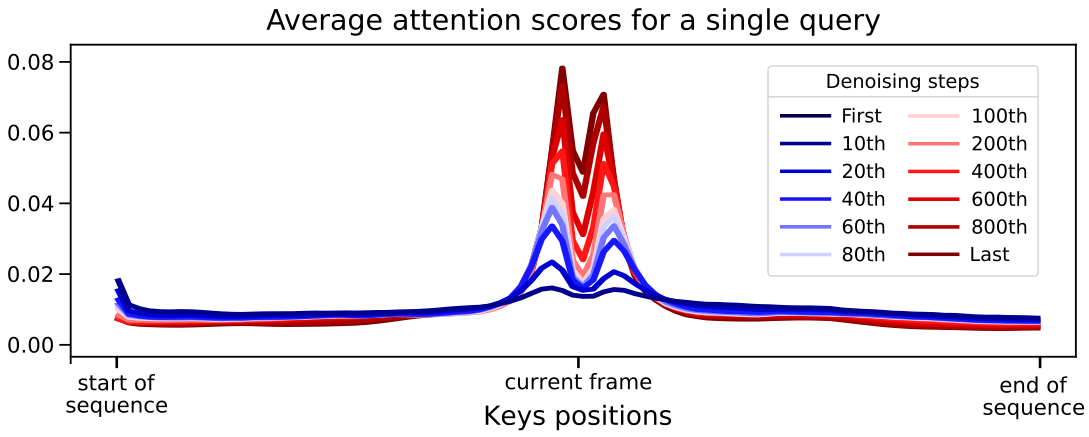

Key observation: at early denoising phases, motion diffusion models prioritize global inter-frame dependencies, shifting towards local relative dependencies as the process unfolds.

The Blended Positional Encodings (BPE) exploit this observation to seamlessly build human motion compositions from different textual descriptions:

1) At early denoising stages: absolute positional encodings + global attention restricted to each textual description.

2) At later denoising stages: relative positional encodings + unrestricted windowed attention.

Consequence: intra-subsequence global dependencies are recovered at the beginning of denoising, and intra- and inter-subsequence motion smoothness and realism are promoted later.
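The two attention patterns described above can be pictured as boolean masks over frame pairs. The sketch below is illustrative only: the function names and the `window` parameter are assumptions, and the actual implementation may construct its masks differently.

```python
import numpy as np


def subsequence_mask(lengths) -> np.ndarray:
    """Early stages: frame i may attend to frame j only if both belong
    to the same subsequence, i.e. share the same textual description."""
    segment_ids = np.repeat(np.arange(len(lengths)), lengths)
    return segment_ids[:, None] == segment_ids[None, :]


def windowed_mask(total_frames: int, window: int) -> np.ndarray:
    """Later stages: frame i may attend to every frame at most `window`
    frames away, regardless of subsequence boundaries."""
    idx = np.arange(total_frames)
    return np.abs(idx[:, None] - idx[None, :]) <= window
```

For two subsequences of 2 and 3 frames, `subsequence_mask([2, 3])` is block-diagonal, so no information crosses the boundary, whereas `windowed_mask(5, 1)` lets frames 1 and 2 see each other across it.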

How? So that the model can handle both absolute (APE) and relative (RPE) positional encodings at inference, we expose it to both during training by randomly alternating between them. As a result, the BPE schedule can be tuned at inference time to trade off intra-subsequence coherence against inter-subsequence realism.
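A minimal sketch of this idea follows, assuming a hard switch after a fixed number of denoising iterations and an independent random choice per training step. The names `encoding_for_step`, `ape_steps`, and `p_absolute` are hypothetical and do not come from the released code.

```python
import random


def encoding_for_step(step: int, num_steps: int, ape_steps: int) -> str:
    """Pick the positional encoding for one denoising iteration.

    `step` counts iterations already performed: 0 is the first (noisiest)
    iteration and num_steps - 1 is the last. The first `ape_steps`
    iterations use absolute encodings, the remaining ones relative.
    """
    if not 0 <= step < num_steps:
        raise ValueError("step must be in [0, num_steps)")
    return "absolute" if step < ape_steps else "relative"


def sample_training_encoding(rng: random.Random, p_absolute: float = 0.5) -> str:
    """Randomly alternate encodings during training so that the model
    learns to denoise with either of them."""
    return "absolute" if rng.random() < p_absolute else "relative"
```

Under these assumptions, raising `ape_steps` gives more iterations to per-description global coherence, while lowering it leaves more iterations for smoothing transitions between subsequences.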

The Pose-Centric Cross-ATtention (PCCAT) minimizes the entanglement between the control signal (e.g., text, objects) and the noisy motion by feeding the former only to the query. Consequently, our model denoises each frame’s noisy pose using only its own condition and the neighboring noisy poses.

Consequence: the PCCAT makes our model robust against varying text descriptions at inference time, and it excels when trained with only a single description per motion sequence. → FlowMDM can be applied to both supervised and unsupervised human motion composition scenarios.
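The mechanism can be sketched as a single attention head in which the condition enters only through the query. This is a simplified illustration, not the released implementation: fusing pose and condition by addition, the single head, and the weight matrices `w_q`, `w_k`, `w_v` are all assumptions of this example.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def pose_centric_cross_attention(poses, conditions, w_q, w_k, w_v, mask=None):
    """Single-head attention where only the query sees the condition.

    poses:      (frames, d) noisy pose embeddings.
    conditions: (frames, d) per-frame condition embeddings.
    mask:       optional (frames, frames) boolean array, True where
                attention is allowed; each row needs at least one True.
    """
    q = (poses + conditions) @ w_q  # query: pose fused with its own condition
    k = poses @ w_k                 # keys: noisy poses only
    v = poses @ w_v                 # values: noisy poses only
    scores = q @ k.T / np.sqrt(k.shape[-1])
    if mask is not None:
        scores = np.where(mask, scores, -np.inf)
    return softmax(scores) @ v
```

Because keys and values never contain the condition, changing the description attached to one frame alters only that frame's output in this sketch; its neighbors are affected solely through the noisy poses.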

🥇 State-of-the-art composition and extrapolation 🥇

Solid curves match the trajectories of the global position (blue) and left/right hands (purple/green). Darker colors indicate instantaneous jerk deviations from the median value, saturating at twice the jerk's standard deviation in the dataset (black segments). Abrupt transitions manifest as black segments amidst lighter ones. FlowMDM exhibits the most fluid motion and preserves the staticity or periodicity of extrapolated actions, in contrast to other methods that show spontaneous high jerk values and fail to keep the motion coherence in extrapolations.

🔗 BibTeX

@inproceedings{barquero2024seamless,
  title={Seamless Human Motion Composition with Blended Positional Encodings},
  author={Barquero, German and Escalera, Sergio and Palmero, Cristina},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year={2024}
}